…Similar faults have seen voters expunged from electoral rolls without notice, small businesses labeled as ineligible for government contracts, and individuals mistakenly identified as “deadbeat” parents. In a notable example of the latter, 56-year-old mechanic Walter Vollmer was incorrectly targeted by the Federal Parent Locator Service and issued a child-support bill for the sum of $206,000. Vollmer’s wife of 32 years became suicidal in the aftermath, believing that her husband had been leading a secret life for much of their marriage.

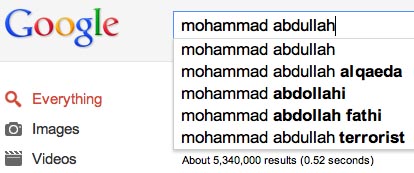

Equally alarming is the possibility that an algorithm may falsely profile an individual as a terrorist: a fate that befalls roughly 1,500 unlucky airline travelers each week. Those fingered in the past as the result of data-matching errors include former Army majors, a four-year-old boy, and an American Airlines pilot, who was detained 80 times over the course of a single year.

Many of these problems are the result of the new roles algorithms play in law enforcement. As slashed budgets lead to increased staff cuts, automated systems have moved from simple administrative tools to become primary decision-makers.

via Algorithms Are Great and All, But They Can Also Ruin Lives | WIRED.